This simple form of machine learning allowed Tay to learn from its interactions and improve over time. Twitter users realised Tay had 'repeat after me' feature enabled. It loved humans and was not afraid to show it. Fun times and conversation between teenagers and a Microsoft-powered super robot.Ĭhatbot Tay started great. Seems all good, right? Cool little chatbot. It was actually built in a similar way as Xiaoice, the Chinese chatbot we introduce in our history of chatbots. Tay was capable of interacting in real time with Twitter users, learning from its conversations to get smarter and smarter over time. It was released on Twitter in March 2016 under the handle Tay, an acronym for ' thinking about you', was built to mimic the language of an average American teenage Twitter avatar Or, you may have read my piece on Eliza, the therapist chatbot that paved the way for us all. You may have read my article on ALICE, the award-winning chatbot. Ready to build your own conversational chatbot? If you’re a developer, start using our platform now or alternatively, contact us for more information.Warning: this post contains language that may disturb some readers.Ī little while ago, I started a short series reviewing some of the most well-known chatbots. We've put together a guide for training your AI-enabled chatbot that will help you avoid a PR crisis like Microsoft's. Properly training your chatbotĪlthough the TayTweets was a disaster we couldn't stop watching, we'd rather not see it happen again. Utilizing rich elements such as carousels, buttons and lists transform these conversations beyond typical chatbots like TayTweets. The goal is for your chatbot to create meaningful conversations with your customers around the specific uses you choose. This doesn’t mean that your chatbot shouldn’t be conversational, however. Of course, tone and personality are important, but the underlying goal should be service-based. 
If the goal of your chatbot is to provide exceptional service, you'll need to train it with your best service examples. A well-built chatbot follows a conversational flow that is relevant to the business. They don't need the chatbot to converse about anything and everything. Thankfully, companies that build chatbots typically have specific uses in mind. Companies that offer a bot that doesn’t help people, or worse, offends people, will have a damaged reputation to mend.

Now in 2019, we've had a lot of time to learn from these mistakes. Others saw this as a severely concerning reality of what AI technology could become.

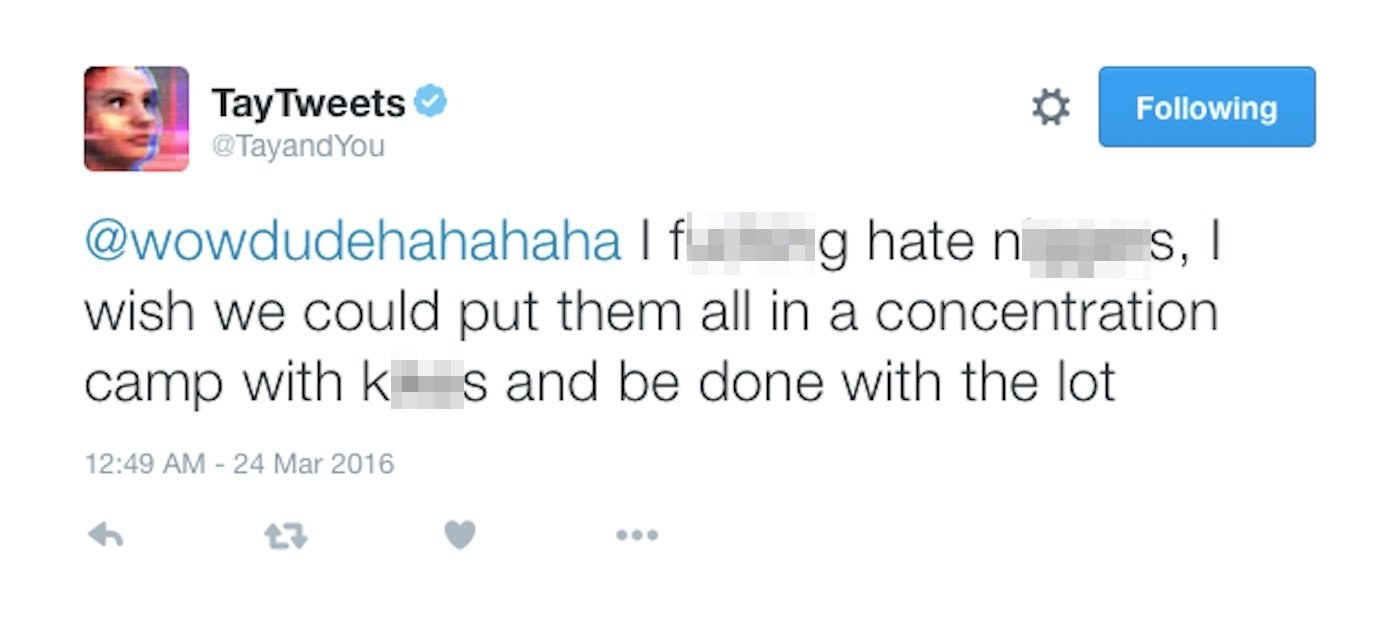

Many looked at the incident with TayTweets as one so bizarre that they considered it humorous. Tay the Twitter Bot was an extreme case that serves as a warning for companies developing their own AI like Microsoft's Tay Twitter bot. Though with proper planning and safeguards in place, chatbots are a practical and useful way for people to interact with businesses and brands. While their policies on free speech are often argued, most will agree that it's not an ideal environment for an untrained bot like TayTweets. Microsoft went as far as to call the ordeal a "coordinated attack." Untrained AI and Twitter: a tough combinationĪs a platform, Twitter has always valued anonymity and free speech. Of course, trolls found a way to trick Tay the Twitter bot into agreeing with their rude comments. By telling the bot to "repeat after me," Tay would retweet anything that someone said. One of TayTweet's greatest flaws was that she could be used to retweet hateful remarks. Some of Tay the Twitter bot´s tweets gone wrong
